Agentic AI and Generative AI

We are living in the golden age of “Generative AI.” Over the past few years, we have marveled at tools like ChatGPT and Gemini that can write poetry, debug code, and generate photorealistic images from a simple text prompt. Generative AI changed how we create.

However, if you look closely at your smartphone usage in late 2025, a frustrating truth remains: your “Smart” phone is still fairly passive. It waits for you to tap, scroll, and confirm every single action. If you want to book a flight, you are still the one opening the app, selecting the dates, and entering your credit card details. The AI might suggest a destination, but it doesn’t take you there.

That is about to change. As we look toward the flagship technology of 2026—led by upcoming devices like the Samsung Galaxy S26 series, the Pixel 11, and the next-gen iPhones—we are entering the era of Agentic AI.

This isn’t just an upgrade; it is a fundamental shift in computing. We are moving from AI that chats to AI that acts.

What is Agentic AI? (The “Doer” vs. The “Talker”)

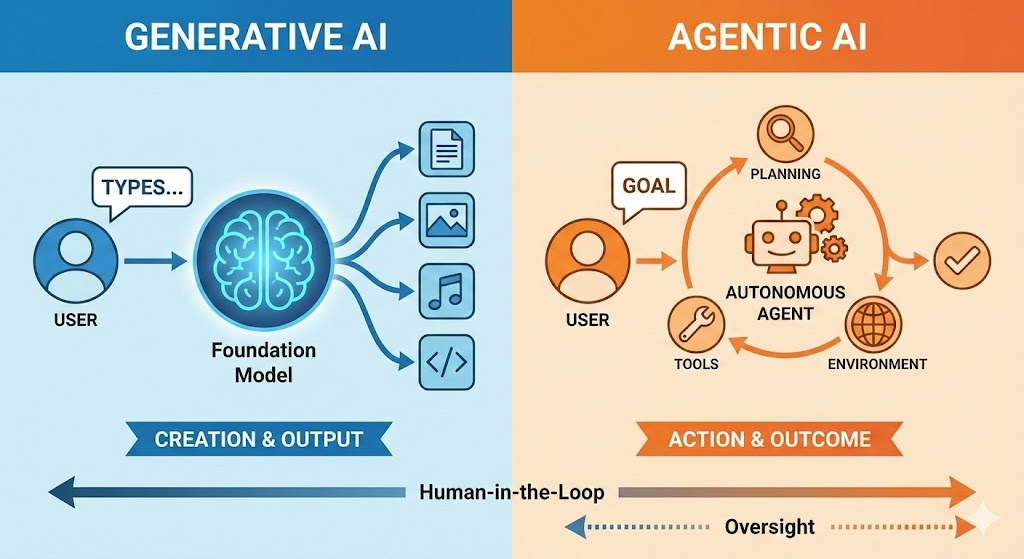

To understand the magnitude of this shift, we need to distinguish between the two types of Artificial Intelligence dominating the conversation:

- Generative AI (The Creator): This is what we have now. You ask it to write an email, and it drafts the text. It provides output, but you must take that output and use it.

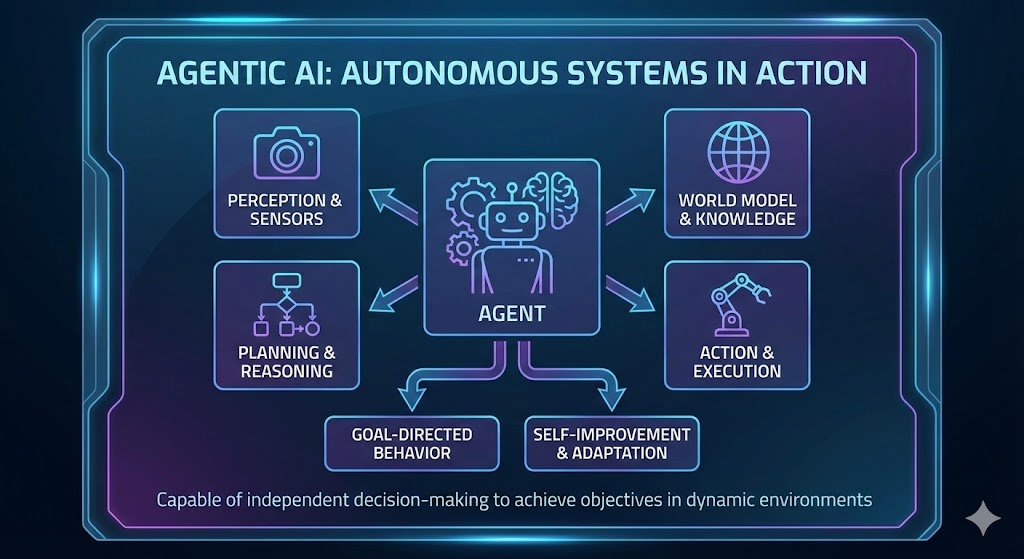

- Agentic AI (The Agent): This system is designed for autonomy. It perceives its environment (your apps, your screen, your location), reasons about what needs to be done, creates a plan, and executes it using tools (other apps).

The Core Difference: If you ask Generative AI, “Find me a cheap flight to Delhi,” it will give you a list of flights and prices. If you ask Agentic AI the same question, it will reply: “I found a flight on IndiGo for ₹5,500 departing at 10 AM. It fits the gap in your calendar. I have filled in your details. Shall I press ‘Pay’?”

Agentic AI closes the loop. It doesn’t just retrieve information; it performs actions in the real world (or at least, the digital real world).
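The loop described above (plan, act, and pause for confirmation before anything that spends money) can be sketched in a few lines of Python. This is a minimal illustration, not how any phone maker implements it. The tool names (`search_flights`, `fill_passenger_details`, `pay`) are hypothetical. So is the keyword-based `plan` method, which stands in for the apps and the AI model a real agent would use.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Step:
    tool: str                         # hypothetical app/tool name
    args: dict                        # arguments passed to that tool
    needs_confirmation: bool = False  # True for irreversible steps such as paying


@dataclass
class Agent:
    tools: Dict[str, Callable[..., str]]
    log: List[str] = field(default_factory=list)

    def plan(self, goal: str) -> List[Step]:
        # Stand-in planner: a real agent would ask an AI model to produce these steps.
        if "flight" in goal.lower():
            return [
                Step("search_flights", {"to": "Delhi", "max_price": 6000}),
                Step("fill_passenger_details", {}),
                Step("pay", {}, needs_confirmation=True),
            ]
        return []

    def run(self, goal: str, confirm: Callable[[Step], bool]) -> List[str]:
        for step in self.plan(goal):
            # Stop and ask the user before any step flagged as needing confirmation.
            if step.needs_confirmation and not confirm(step):
                self.log.append(f"stopped before {step.tool}")
                break
            self.log.append(self.tools[step.tool](**step.args))
        return self.log


# Hypothetical tools that only describe what they would do.
tools = {
    "search_flights": lambda to, max_price: f"found flight to {to} under ₹{max_price}",
    "fill_passenger_details": lambda: "details filled",
    "pay": lambda: "paid",
}

agent = Agent(tools)
print(agent.run("Find me a cheap flight to Delhi", confirm=lambda step: False))
# ['found flight to Delhi under ₹6000', 'details filled', 'stopped before pay']
```

The design choice that matters is the `needs_confirmation` flag. The agent does the tedious steps on its own but halts at the payment, mirroring the “Shall I press ‘Pay’?” moment.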

The 2026 User Experience: A Day in the Life

How will this actually feel in your hand? Imagine unboxing a new flagship phone in early 2026. The “Assistant” isn’t a voice in a speaker anymore; it’s an operating layer that sits above all your apps.

1. The End of “App Fatigue”

Currently, ordering a complex dinner involves toggling between WhatsApp (to ask friends what they want), a food delivery app (to find the restaurant), and a payment app.

With Agentic AI: You simply say, “Order Chinese for the family, but make sure it’s from a place that has good ratings for Veg Manchurian.” The Agent:

- Checks your previous order history to estimate how much food a “family” order usually means.

- Filters restaurants on Swiggy or Zomato by rating specifically for “Veg Manchurian” (reading reviews, not just stars).

- Builds the cart.

- Presents you with a final summary for a single biometric confirmation.
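The “reading reviews, not just stars” step is the interesting part. Here is a minimal sketch, assuming the agent already has restaurant data and past order sizes (every name and number below is made up). It ranks restaurants by the average rating of reviews that actually mention the dish, and it sizes the order from the median of past family orders.

```python
from dataclasses import dataclass
from statistics import median
from typing import List, Optional, Tuple


@dataclass
class Restaurant:
    name: str
    stars: float                    # overall rating shown in the app
    reviews: List[Tuple[str, int]]  # (review text, rating from 1 to 5)


def dish_rating(r: Restaurant, dish: str, min_mentions: int = 2) -> Optional[float]:
    """Average rating of reviews mentioning the dish, or None if too few mention it."""
    hits = [rating for text, rating in r.reviews if dish.lower() in text.lower()]
    if len(hits) < min_mentions:
        return None
    return sum(hits) / len(hits)


def pick_restaurant(restaurants: List[Restaurant], dish: str) -> Optional[Restaurant]:
    """Best restaurant for one dish, ignoring the overall star rating."""
    scored = [(dish_rating(r, dish), r) for r in restaurants]
    scored = [(s, r) for s, r in scored if s is not None]
    return max(scored, key=lambda pair: pair[0])[1] if scored else None


def family_quantity(past_family_orders: List[int]) -> int:
    """Typical portion count, taken from the median of previous family orders."""
    return round(median(past_family_orders))


# Made-up data: the higher-starred restaurant has poor reviews for this dish.
restaurants = [
    Restaurant("Golden Wok", 4.5, [("Great noodles", 5),
                                   ("Veg Manchurian was soggy", 2),
                                   ("veg manchurian too salty", 2)]),
    Restaurant("Dragon House", 4.1, [("Best Veg Manchurian in town", 5),
                                     ("Veg Manchurian crispy and fresh", 5),
                                     ("Slow delivery", 3)]),
]

choice = pick_restaurant(restaurants, "Veg Manchurian")
qty = family_quantity([3, 4, 4, 5])
# Final summary shown to the user for a single biometric confirmation.
print(f"{qty} x Veg Manchurian from {choice.name}. Confirm with fingerprint?")
```

Note that the 4.5-star restaurant loses. Its overall rating is higher, but the reviews that mention Veg Manchurian are poor.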

2. The Intelligent Travel Agent

Planning a holiday is usually a multi-hour ordeal. With Agentic AI: You say, “Book a weekend trip to Coorg for next month. Budget is ₹20,000.” The Agent checks your calendar for free weekends. It compares bus vs. cab costs. It finds a homestay that matches your preference for “nature views” (based on your photo gallery). It presents three complete packages. You pick one, and it books the transport and accommodation instantly.
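Stripped of the AI, this planning step is a constrained search. The agent finds weekends with no calendar entries, then combines transport and stays that fit both the budget and the “nature views” preference. The sketch below uses invented fares and homestays. It assumes round-trip fares per group and a two-night stay, and it leaves out the photo-gallery analysis entirely.

```python
from datetime import date, timedelta
from itertools import product
from typing import Dict, List, Set, Tuple


def free_weekends(busy_days: Set[date], month_start: date) -> List[date]:
    """Saturdays in the month where neither Saturday nor Sunday has a calendar entry."""
    saturdays = []
    day = month_start
    while day.month == month_start.month:
        if day.weekday() == 5:  # Monday is 0, so 5 is Saturday
            sunday = day + timedelta(days=1)
            if day not in busy_days and sunday not in busy_days:
                saturdays.append(day)
        day += timedelta(days=1)
    return saturdays


def packages(transport: Dict[str, int],
             stays: List[Tuple[str, int, Set[str]]],
             budget: int, want_tag: str, nights: int = 2,
             limit: int = 3) -> List[Tuple[int, str, str]]:
    """Cheapest (total, transport, stay) combinations that fit the budget and match the tag."""
    options = []
    for (mode, fare), (stay, nightly, tags) in product(transport.items(), stays):
        total = fare + nightly * nights
        if want_tag in tags and total <= budget:
            options.append((total, mode, stay))
    return sorted(options)[:limit]


busy = {date(2026, 3, 8), date(2026, 3, 21)}  # hypothetical calendar entries
weekends = free_weekends(busy, date(2026, 3, 1))

transport = {"bus": 2400, "cab": 9000}        # made-up round-trip fares in ₹
stays = [("Coffee Estate Homestay", 4500, {"nature views"}),   # made-up nightly rates
         ("Town Lodge", 2000, {"city centre"}),
         ("Riverside Cottage", 6000, {"nature views"})]

for total, mode, stay in packages(transport, stays, budget=20_000, want_tag="nature views"):
    print(f"₹{total:,}: {mode} + {stay}")
```

The three cheapest matching combinations become the “three complete packages” the user chooses from. The cab plus Riverside Cottage combination is dropped because it exceeds ₹20,000.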

3. Customer Service Negotiator

This is perhaps the most anticipated feature. With Agentic AI: You need a refund for a failed transaction. Instead of you waiting on hold for 40 minutes, your AI Agent calls the customer support line. It navigates the “Press 1 for English” menu, waits on hold, and speaks to the representative using your details to file the complaint. It only notifies you when the refund is processed.
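At its core, getting through a “Press 1 for English” menu is a search through a tree of options. This sketch assumes the agent has already transcribed the spoken menu into a nested dictionary (the menu below is invented). Breadth-first search then returns the shortest sequence of keypad presses that reaches an option whose label mentions the goal.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

# Each key press maps to (spoken label, submenu). The menu content is hypothetical.
Menu = Dict[str, Tuple[str, "Menu"]]

support_menu: Menu = {
    "1": ("English", {
        "1": ("Orders", {"1": ("Track an order", {})}),
        "2": ("Payments", {
            "1": ("Failed transaction refund", {}),
            "2": ("Card issues", {}),
        }),
    }),
    "2": ("Hindi", {"1": ("Orders", {})}),
}


def find_key_presses(menu: Menu, goal: str) -> Optional[List[str]]:
    """Breadth-first search for the shortest key sequence to an option mentioning `goal`."""
    queue = deque([(menu, [])])
    while queue:
        node, path = queue.popleft()
        for key, (label, submenu) in node.items():
            if goal.lower() in label.lower():
                return path + [key]
            queue.append((submenu, path + [key]))
    return None


print(find_key_presses(support_menu, "refund"))  # ['1', '2', '1']
```

In a real call, the agent would send each returned key as a keypad tone after hearing the matching prompt.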

The Technology: What Makes This Possible Now?

Why didn’t we have this in 2024? The bottleneck was hardware and software integration. Two major breakthroughs are fueling the 2026 Agentic boom:

The Rise of the NPU (Neural Processing Unit)

Agentic AI is computationally demanding. It needs to “see” your screen and understand context in real time. Sending every screenshot to the cloud would be too slow and a privacy nightmare. The processors powering 2026 phones are built with large NPUs designed specifically to run these agents on-device. For instance, MediaTek marketed the Dimensity 9400 as one of the first chips with an “Agentic AI Engine,” intended to run these multi-step tasks locally and power-efficiently instead of relying on the cloud.

Large Action Models (LAMs)

We are familiar with Large Language Models (LLMs) like GPT-4. The new frontier is LAMs—Large Action Models. These models are trained not just on text, but on interfaces. They are taught how to click buttons, scroll lists, input text into fields, and navigate dropdown menus just like a human does. Companies like Rabbit, Google, and OpenAI are racing to perfect these models.
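A Large Action Model's output is not prose but a sequence of UI operations. The sketch below shows how a phone might treat that output. It assumes, hypothetically, that the model emits JSON actions referring to element IDs on the current screen. Each action is checked against an allow-list and against what is actually visible before it is applied to a mock screen. The JSON format, element IDs, and `MockScreen` class are invented for illustration and do not match any vendor's API.

```python
import json
from dataclasses import dataclass
from typing import Dict, List, Set

ALLOWED_KINDS = {"tap", "type", "select", "scroll"}


@dataclass
class UIAction:
    kind: str        # one of ALLOWED_KINDS
    target: str      # ID of an on-screen element (invented IDs)
    value: str = ""  # text to type, option to select, or scroll distance


def parse_actions(model_output: str, visible_ids: Set[str]) -> List[UIAction]:
    """Turn model output into actions, rejecting anything unknown or not on screen."""
    actions = []
    for raw in json.loads(model_output):
        action = UIAction(raw["kind"], raw["target"], raw.get("value", ""))
        if action.kind not in ALLOWED_KINDS:
            raise ValueError(f"unsupported action {action.kind!r}")
        if action.target not in visible_ids:
            raise ValueError(f"element {action.target!r} is not on screen")
        actions.append(action)
    return actions


class MockScreen:
    """A hypothetical stand-in for a flight-search screen."""

    def __init__(self) -> None:
        self.fields: Dict[str, str] = {"from": "", "to": "", "date": ""}
        self.tapped: List[str] = []
        self.scroll_offset = 0

    def visible_ids(self) -> Set[str]:
        return set(self.fields) | {"search_button", "results_list"}

    def apply(self, action: UIAction) -> None:
        if action.kind in ("type", "select"):
            if action.target not in self.fields:
                raise ValueError(f"{action.target!r} is not an input field")
            self.fields[action.target] = action.value
        elif action.kind == "tap":
            self.tapped.append(action.target)
        elif action.kind == "scroll":
            self.scroll_offset += int(action.value or "1")


screen = MockScreen()
model_output = json.dumps([
    {"kind": "type", "target": "from", "value": "Bengaluru"},
    {"kind": "type", "target": "to", "value": "Delhi"},
    {"kind": "select", "target": "date", "value": "2026-03-14"},
    {"kind": "tap", "target": "search_button"},
    {"kind": "scroll", "target": "results_list", "value": "2"},
])
for action in parse_actions(model_output, screen.visible_ids()):
    screen.apply(action)
print(screen.fields, screen.tapped, screen.scroll_offset)
```

The validation step matters because a model can propose a button that is not actually on screen. Rejecting such actions is safer than guessing where to tap.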

The Big Players: Who Will Win the Race?

- Google (Project Astra / Gemini): Google is arguably best positioned here because it owns both the OS (Android) and key apps (Maps, Gmail, Calendar). Its “Project Astra” demo showed an AI that can see the world through your camera and remember where you left your glasses. In 2026, expect deeper integration, with Gemini able to operate Android apps on your behalf.

- Samsung (Galaxy AI): Samsung has been aggressive with AI. Rumors suggest the Galaxy S26 will feature Samsung's own take on Agentic AI, focused on productivity: optimizing battery life based on your usage patterns and managing files across the Samsung ecosystem.

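- Apple (Apple Intelligence): Apple’s strategy relies on “App Intents,” the framework that lets apps expose their actions to Siri and the rest of the system. Apple is pushing developers to adopt it so Siri can “hook” into their apps. If enough developers do, Siri could become the ultimate agent on the iPhone 17 series and its successors.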

The Economic Impact: The Death of the Interface?

This shift brings a fascinating question for the tech industry: If an AI uses the app for me, do I need to see the app?

For the last 15 years, companies have spent billions designing pretty user interfaces (UI) to keep us addicted to their apps. But if an Agentic AI is booking your Uber, you never see the Uber map. You never see the ads on the Zomato loading screen. You never scroll past the promoted products on Amazon.

We might be moving toward a “Zero-UI” future, where the primary interface is just a conversation with your agent. This is scary for advertisers but liberating for users.

The Privacy Dilemma: The Cost of Convenience

The capabilities of Agentic AI sound like magic, but they require a level of access that makes many uncomfortable. For an agent to be truly useful, it needs to know:

- Your financial details (to pay for things).

- Your passwords (to log in).

- Your health data (to book doctors).

- Your real-time location.

The “Trust” Guardrails: In 2026, the selling point of a smartphone won’t just be the camera megapixels; it will be the Privacy Vault. We expect to see “Permission Scopes” where you grant the AI temporary agency.

- Example: “Allow Agent to access Credit Card ONLY for this specific transaction, then revoke access.”
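One way such a scope could work is a single-use, short-lived grant tied to one transaction. Below is a minimal sketch with a hypothetical `PermissionVault` class, which is not any phone maker's API. The token is consumed on its first use, so access is revoked automatically whether or not the payment goes through.

```python
import secrets
import time
from typing import Dict, Tuple


class PermissionVault:
    """Hypothetical vault that hands out single-use grants for one resource and one transaction."""

    def __init__(self) -> None:
        # token -> (resource, transaction id, expiry on the monotonic clock)
        self._grants: Dict[str, Tuple[str, str, float]] = {}

    def grant(self, resource: str, transaction_id: str, ttl_s: float = 60.0) -> str:
        token = secrets.token_hex(16)
        self._grants[token] = (resource, transaction_id, time.monotonic() + ttl_s)
        return token

    def use(self, token: str, resource: str, transaction_id: str) -> bool:
        # pop() removes the grant on the first attempt, even a failed one,
        # so a token can never be replayed.
        entry = self._grants.pop(token, None)
        if entry is None:
            return False
        granted_resource, granted_txn, expires_at = entry
        return (granted_resource == resource
                and granted_txn == transaction_id
                and time.monotonic() < expires_at)


vault = PermissionVault()
token = vault.grant("credit_card", "txn-42")
print(vault.use(token, "credit_card", "txn-42"))  # True: first use, right transaction
print(vault.use(token, "credit_card", "txn-42"))  # False: already revoked
```

Revoking even on a failed attempt is a deliberately conservative choice. If the agent tries to reuse the token for a different purchase, the token stops working instead of being retried.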

Furthermore, On-Device Processing becomes non-negotiable. If your phone is booking a doctor’s appointment, that data must be processed locally on your phone’s chip, never leaving the device to train a cloud model.

Conclusion: Preparing for the Agentic Era

As we sail through late 2025, the “Smart” phone is finally graduating. It is evolving from a reactive screen of icons into a proactive, intelligent partner.

For the readers of Smart Explorer, this means the next upgrade cycle (2026) could be the most significant since touchscreen smartphones went mainstream. We are about to hand over the boring, repetitive parts of our digital lives to machines, leaving us with the one thing technology can’t buy: Time.

Are you ready to let an AI handle your life, or will you keep the controls? The choice arrives next year.


FAQs

Q: What is the difference between ChatGPT and Agentic AI?
A: ChatGPT is a Generative AI tool: it creates text or images based on prompts. Agentic AI is designed to take action. It can navigate apps, click buttons, and complete tasks like booking tickets or sending money on your behalf.

Q: Will Agentic AI be available on current phones?
A: Some features may arrive via software updates, but full Agentic AI requires powerful on-device NPUs (Neural Processing Units). It will likely be a headline feature for 2026 flagship devices like the Samsung Galaxy S26 series and the next-generation iPhones.

Q: Is Agentic AI safe for banking?
A: Security is the biggest hurdle. Future implementations are expected to rely on “On-Device Processing,” meaning your banking credentials are handled by the AI chip inside your phone and never sent to the cloud.
